|

MANTRA

Monitoring Multicast on a Global Scale: Project Overview, History, Publications and Results

|

|

|

Project Overview:

MANTRA (Monitor and Analysis of Traffic in Multicast Routers) is a tool for the global monitoring of the multicast infrastructure at the network layer. MANTRA was developed by Prashant Rajvaidya while pursuing his Ph.D. in the Department of Computer Science at the University of California, Santa Barbara, and during his internship at the Cooperative Association for Internet Data Analysis (CAIDA) at the San Diego Supercomputer Center (SDSC). MANTRA, as well as the rest of Prashant's Ph.D. work, was supervised by Kevin C. Almeroth and supported by grants from Cisco Systems and the National Science Foundation (NSF).

MANTRA collects data from multiple routers, aggregates the collected data to generate global views, and processes these views to generate monitoring results[4]. Data is collected by logging into the routers and capturing their internal memory tables[5]. The data collected ranges from routing tables for MBGP and DVMRP, to forwarding tables for PIM, to source announcements for MSDP[6].

Results from MANTRA have been used to monitor various aspects of multicast including multicast deployment[3], network topology characteristics[2], MSDP operation[1][4], and data distribution trees[4]. Some of the applications of these results include traffic analysis, route analysis, analysis of host-group behavior, and routing problem debugging.

|

Ongoing Data Collection:

During the first 5 years of its operation (1998-2003), MANTRA was used to monitor 18 routers, including several key exchange points and border routers for transit providers. During this period, the granularity of monitoring results was 15 minutes; i.e., fresh data was collected and results were updated at 15-minute intervals. These results were available online in near real-time in the form of descriptive tables, static plots, interactive topology maps, and interactive geographic visualizations.

Since 2003, the scope of MANTRA has been limited to collecting only MBGP information from a few routers in the Internet2 backbone. The goal behind this data collection is to conduct a long-term study of the evolution of multicast. We have, however, discontinued real-time data processing and the real-time results are no longer available.

|

Project Publications:

Listed below are the publications that have been generated by this work:

- Multicast Security

- Multicast Routing Analysis

- [2] P. Rajvaidya and K. Almeroth, "A Study of Multicast Routing Instabilities", IEEE Internet Computing, vol. 8, num. 5, pp. 42-49, September/October 2004. [Text Abstract]

- [3] P. Rajvaidya and K. Almeroth, "Analysis of Routing Characteristics in the Multicast Infrastructure", IEEE Infocom, San Francisco, California, USA, April 2003. [Text Abstract]

- [4] P. Rajvaidya and K. Almeroth, "Building the Case for Distributed Global Multicast Monitoring", Multimedia Computing and Networking (MMCN), San Jose, California, USA, January 2002. [Text Abstract]

- MANTRA Design and Implementation

- [5] P. Rajvaidya and K. Almeroth, "A Router-Based Technique for Monitoring the Next-Generation of Internet Multicast Protocols", International Conference on Parallel Processing (ICPP), Valencia, Spain, September 2001. [Text Abstract]

- [6] P. Rajvaidya, K. Almeroth and k. claffy, "A Scalable Architecture for Monitoring and Visualizing Multicast Statistics", IFIP/IEEE Workshop on Distributed Systems: Operations & Management (DSOM), Austin, Texas, USA, December 2000. [Text Abstract]

|

Important Results:

Over the lifetime of MANTRA, we have collected over 3 million routing tables from 19 different locations, accounting for approximately a terabyte of data. We have analyzed this data to track multicast evolution, estimate usage, monitor topology changes, and troubleshoot routing problems. The following are some of the key findings and results based on our work[2][4]:

|

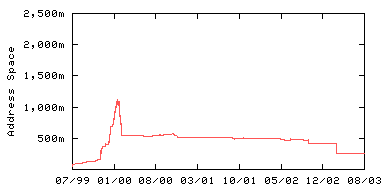

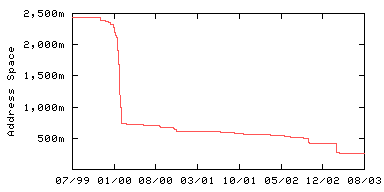

| The Size of the Active Infrastructure

The figure on the right shows the active address space. We define active address space as the number of addresses that have been announced at least once prior to the measurement period and will be announced at least once after it. The following are the key conclusions from these results:

- Deployment doubled during the 4-year period shown in the graph, to about 40 million addresses.

- Deployment had been decreasing slowly but consistently since July 2001, yet it was still twice what it was when we first started collecting data in 1999.

|

Active multicast address space

(Infrastructure Size). |
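The definition of active address space above can be illustrated with a minimal Python sketch. This is not MANTRA's implementation: the `sightings` structure, the function names, the sample prefixes, and the strict treatment of the boundaries (announced strictly before and strictly after time `t`) are all assumptions made for illustration.

```python
import ipaddress


def address_count(prefixes):
    """Count the distinct IPv4 addresses covered by a collection of prefixes."""
    nets = [ipaddress.ip_network(p) for p in prefixes]
    # collapse_addresses merges overlapping and adjacent prefixes,
    # so no address is counted twice.
    return sum(n.num_addresses for n in ipaddress.collapse_addresses(nets))


def active_address_space(sightings, t):
    """Addresses announced at least once before t and at least once after t.

    `sightings` (hypothetical structure) maps a prefix to the list of
    measurement times at which it was announced.
    """
    active = [p for p, times in sightings.items() if min(times) < t < max(times)]
    return address_count(active)


# Made-up sample data: prefix -> times at which the prefix was announced.
sightings = {
    "10.0.0.0/8": [1, 5, 9],
    "192.0.2.0/24": [4, 6],
    "198.51.100.0/24": [7, 8],
}

print(active_address_space(sightings, 5))  # 16777472 (a /8 plus a /24)
```

Note that a prefix does not have to be announced at time `t` itself to count as active; it only needs announcements on both sides of `t`.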

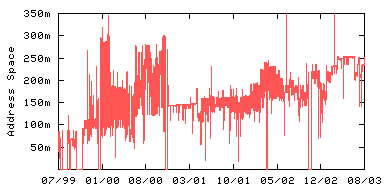

| Connectedness of the Infrastructure

The figure on the right shows the connected address space. We define connected address space as the number of addresses represented by valid route announcements and, therefore, connected to the MBGP topology during each measurement interval. While active address space (above) represents all the addresses that can possibly be connected, connected address space represents all the addresses that are actually reachable at the measurement instant. The following are the key conclusions from these results:

- Significant Increase in Infrastructure Connectivity: Connectivity was poor between 1999 and 2001 because only a fraction of the active address space was connected at any one time. Towards the end of the measurement period, however, connectivity increased significantly, with over 95% of the active address space connected to the MBGP topology at any given instant.

- Routing Stability Problems: Routing was not particularly stable during much of the 4-year period because connectedness within the infrastructure varied to a high degree. This instability created significant problems for application developers and network administrators. As a result, application developers and network providers tried multicast but did not continue to use it.

|

Address space

connected to the Infrastructure. |
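As a rough illustration of how connectedness relates to the active address space, the following Python sketch computes the fraction of active addresses covered by the prefixes announced in one measurement snapshot. The function names and the sample prefixes are hypothetical, and the sketch assumes every announced prefix is a valid route; it is not taken from MANTRA itself.

```python
import ipaddress


def address_count(prefixes):
    """Count the distinct IPv4 addresses covered by a collection of prefixes."""
    nets = [ipaddress.ip_network(p) for p in prefixes]
    # Overlapping prefixes are merged first so addresses are counted once.
    return sum(n.num_addresses for n in ipaddress.collapse_addresses(nets))


def connected_fraction(announced_now, active_prefixes):
    """Fraction of the active address space announced in the current snapshot."""
    active = address_count(active_prefixes)
    if active == 0:
        return 0.0
    return address_count(announced_now) / active


# Made-up sample data for one measurement interval.
active_prefixes = ["192.0.2.0/24", "198.51.100.0/24"]
# The /25 lies inside the /24, so it adds no new addresses.
announced_now = ["192.0.2.0/24", "192.0.2.0/25"]

print(connected_fraction(announced_now, active_prefixes))  # 0.5
```

Under this reading, the "over 95%" figure quoted above corresponds to a fraction greater than 0.95 at a given measurement instant.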

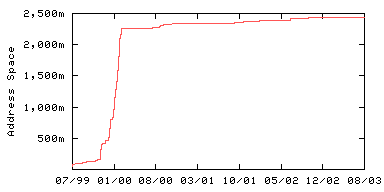

| Growth in Native Multicast Deployment

The figure on the right shows the growth of the multicast infrastructure. The line in this graph rises each time a new address or group of addresses is first announced via an MBGP route announcement. The results show a clear rise in the size of the infrastructure. The following are some of the key observations and conclusions:

- The amount of address space grew nearly 50-fold over the four-year period, from 50 million to about 2.5 billion addresses.

- Up until March 2000, growth occurred in spurts.

- Only gradual growth has occurred since then.

- Only small networks have joined the topology recently.

|

Growth of the

MBGP-reachable address space. |

| Loss in Native Multicast Deployment

The figure on the right shows the loss of networks in the infrastructure, that is, networks that stopped announcing their MBGP routes. This graph takes as its starting point the total number of addresses announced via MBGP over the four-year measurement period. The graph then decrements this number each time an MBGP advertisement is observed for the last time. The basic idea is that growth shows the cumulative amount and timing of newly advertised address space, while loss shows when address space stops being advertised. The following are some key observations and conclusions:

- There was significant loss (92%) during the 4-year period.

- Most of the networks lost were unstable and transient. This loss occurred because, when system administrators could not maintain an acceptable level of service, multicast was essentially turned off.

- The most significant drop occurred in March 2000 during the dismantling of the MBone. That is, as use of the DVMRP-based MBone declined, fewer service providers were willing to offer tunnels. And because many service providers did not offer native multicast, many (public) Internet users had no way of receiving multicast content.

|

Loss of the

MBGP-reachable address space. |
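The growth and loss curves described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `sightings` structure and the `end` parameter are hypothetical, prefixes are assumed not to overlap, and a prefix still announced at the end of data collection is assumed not to count as lost.

```python
import ipaddress


def growth_and_loss(sightings, end):
    """Return (growth, loss) as lists of (time, address_count) steps.

    `sightings` maps a prefix to the times at which it was announced.
    `end` is the last measurement time of the collection period.
    """
    size = {p: ipaddress.ip_network(p).num_addresses for p in sightings}

    # Growth: add a prefix's addresses when it is first announced.
    growth, running = [], 0
    for p in sorted(sightings, key=lambda p: min(sightings[p])):
        running += size[p]
        growth.append((min(sightings[p]), running))

    # Loss: start from the total and subtract a prefix's addresses when it
    # is seen for the last time, unless it is still announced at `end`.
    loss, remaining = [], sum(size.values())
    for p in sorted(sightings, key=lambda p: max(sightings[p])):
        last_seen = max(sightings[p])
        if last_seen < end:
            remaining -= size[p]
            loss.append((last_seen, remaining))
    return growth, loss


# Made-up sample data: prefix -> times at which the prefix was announced.
sightings = {
    "192.0.2.0/24": [1, 3],
    "198.51.100.0/24": [2, 9],
    "203.0.113.0/24": [5, 6],
}

growth, loss = growth_and_loss(sightings, end=9)
print(growth)  # [(1, 256), (2, 512), (5, 768)]
print(loss)    # [(3, 512), (6, 256)]
```

In this toy data set, two of the three prefixes disappear before the end of the collection period, so the loss curve ends at one third of the total address space ever announced.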

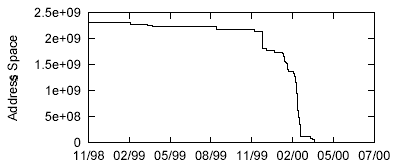

| The End of MBone

As the tunnel-based MBone was replaced with native multicast, the MBone essentially ceased to exist. The figure on the right shows the loss in the address space reachable via the DVMRP-based MBone at FIXW between November 1998 and July 2000. The graph takes as its starting point the total number of unique addresses that were advertised via DVMRP during the collection period. It then decrements at the point in time when each address stops being advertised. This figure confirms that the use of DVMRP started to decline in February 1999 and had almost completely ceased by March 2000.

|

Loss of the DVMRP-reachable address space. |

|